Serverless development

Nowadays, the infrastructure needed for large projects has become very complex, and installing, configuring, managing and maintaining it correctly requires extensive knowledge and a great deal of effort.

So, in this new instalment of Inspiring Technology, our Hunters delve into serverless architectures. This cloud-based approach to software development lets you focus on the specific parts of an application without having to manage a server infrastructure and everything that entails.

Various cloud service providers, such as AWS, Google Cloud and Microsoft Azure, offer all kinds of fully managed services. One example is Database-as-a-Service (DBaaS), where the provider itself handles everything you need: installation, configuration, hosting, security, maintenance and so on. You can use it to develop applications without having to worry about the underlying infrastructure.

Function-as-a-Service (FaaS) is another cloud computing service: you hand your code to the service provider, which executes it whenever it is needed. This lets developers focus on building the specific parts of their application, which increases development speed and efficiency.

Advantages and disadvantages of serverless architecture

Scalability

One of the major advantages of serverless architecture is its capacity for horizontal scaling: when dealing with a large number of requests, the service provider automatically instantiates more worker nodes in parallel. However, depending too heavily on external third-party services can create bottlenecks.

Conversely, it also allows scaling to zero: when a particular service is not being used, no instance of it is kept running, which avoids unnecessary resource consumption.
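To make both directions of scaling concrete, here is a minimal sketch of how an instance count might follow the load. It assumes a hypothetical model in which each instance can serve at most `per_instance_concurrency` requests at once; real platforms use their own, provider-specific autoscaling policies.

```python
import math


def instances_needed(concurrent_requests: int, per_instance_concurrency: int) -> int:
    """Number of worker instances to run for a given load.

    Hypothetical model: each instance handles at most
    `per_instance_concurrency` simultaneous requests.
    """
    if per_instance_concurrency <= 0:
        raise ValueError("per_instance_concurrency must be positive")
    if concurrent_requests <= 0:
        return 0  # scale to zero: nothing is kept running while idle
    # Horizontal scaling: add instances until the whole load fits
    return math.ceil(concurrent_requests / per_instance_concurrency)


for load in (0, 1, 10, 250):
    print(load, "->", instances_needed(load, per_instance_concurrency=10))
```

The same function covers both cases discussed above: a burst of requests yields more parallel instances, while zero requests yields zero instances.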

Pay-per-use

Another big advantage is that, because services are only instantiated when they are used, service providers bill according to usage.

This is especially beneficial for applications whose workload is inherently lower during certain periods: if the application is not run during a given month, no costs are incurred.

However, costs grow with usage, so a month of very high usage may end up more expensive than an on-premise solution.
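The trade-off between pay-per-use and a fixed-cost deployment can be illustrated with some simple arithmetic. The sketch below assumes a common billing shape (a charge per invocation plus a charge per GB-second of compute); all prices and the flat monthly cost are hypothetical placeholders, not any provider's actual rates.

```python
# Hypothetical placeholder prices, not any real provider's rates
PRICE_PER_MILLION_INVOCATIONS = 0.25  # per 1,000,000 invocations
PRICE_PER_GB_SECOND = 0.00002         # per GB of memory held for one second
FLAT_MONTHLY_COST = 150.0             # hypothetical always-on server, per month


def pay_per_use_cost(invocations: int, avg_duration_s: float, memory_gb: float) -> float:
    """Monthly bill under a simple pay-per-use model: requests plus compute time."""
    request_cost = invocations / 1_000_000 * PRICE_PER_MILLION_INVOCATIONS
    # Compute is billed in GB-seconds: memory allocated x time spent running
    compute_cost = invocations * avg_duration_s * memory_gb * PRICE_PER_GB_SECOND
    return request_cost + compute_cost


# Three hypothetical months of the same function (200 ms, 0.5 GB per call)
for label, calls in [("idle", 0), ("quiet", 100_000), ("busy", 500_000_000)]:
    cost = pay_per_use_cost(calls, avg_duration_s=0.2, memory_gb=0.5)
    cheaper = "pay-per-use" if cost < FLAT_MONTHLY_COST else "flat"
    print(f"{label:>5}: {cost:10.2f} vs {FLAT_MONTHLY_COST:.2f} -> {cheaper} is cheaper")
```

With these made-up numbers, an idle month costs nothing and a quiet month costs a fraction of the flat fee, while a very busy month costs several times more, which is the pattern described above.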

Vendor lock-in

An important aspect to keep in mind when considering developing a serverless application is vendor lock-in. This term refers to the situation of being dependent on a particular supplier.

Generally, each service provider offers its own services, each with specific features, capabilities and configurations. When you develop an application on a particular provider's platform, you become dependent on it, which complicates matters if you later want to switch providers.
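Even a function's entry point differs from provider to provider. As an illustration, an OpenWhisk Python action is a `main(args)` function that receives and returns a dictionary, while an AWS Lambda Python handler takes `(event, context)` and, behind an API Gateway proxy integration (an assumption of this sketch), receives the request body as a JSON string. The `calculate_total` business logic is a hypothetical example; keeping it in a plain function and confining provider-specific code to thin entry points is one way to reduce the amount of code tied to a single vendor.

```python
import json


def calculate_total(items: list[dict]) -> float:
    """Provider-independent business logic (hypothetical example)."""
    return sum(item["price"] * item["quantity"] for item in items)


# OpenWhisk-style Python action: main(args) receives a dict and returns a dict
def main(args: dict) -> dict:
    return {"total": calculate_total(args.get("items", []))}


# AWS Lambda-style handler behind an API Gateway proxy integration (assumed):
# the body arrives as a JSON string and the response needs statusCode/body
def lambda_handler(event: dict, context) -> dict:
    items = json.loads(event.get("body") or "{}").get("items", [])
    return {"statusCode": 200, "body": json.dumps({"total": calculate_total(items)})}
```

Switching providers would then mean rewriting only the small adapter, not `calculate_total`; configuration, triggers and managed services remain provider-specific, though.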

Performance

While scaling to zero saves costs, it can also have a major impact on performance in certain situations.

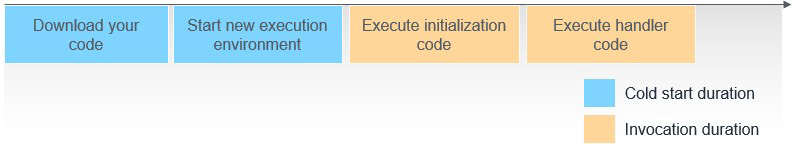

Every time a service with no running instance is invoked, a new instance has to be created: the necessary code is downloaded and the execution environment is prepared, which can considerably increase response times. This problem is known as a cold start.

Figure 1: Diagram of a cold start.

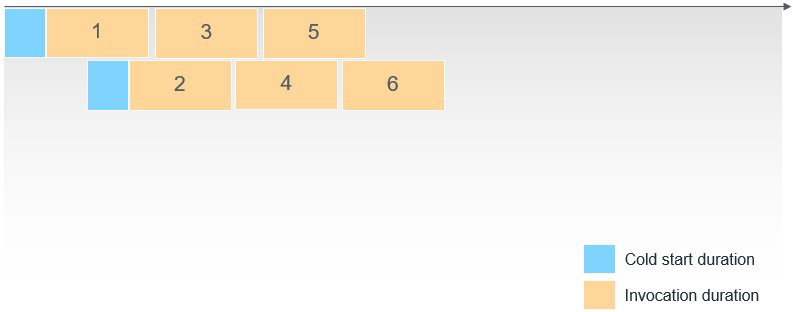

To mitigate this, we run executions sequentially so that they reuse the same container. The cold start then only affects the first execution within that container.

Figure 2: Cold start prevention diagram.
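The effect of container reuse on response times can be seen in a toy simulation. This is a sketch under stated assumptions: `COLD_START_MS` and `EXECUTION_MS` are hypothetical timings, and `ContainerPool` is an illustrative model of a single function's container, not part of any platform's API.

```python
COLD_START_MS = 800  # hypothetical: create container, fetch code, init runtime
EXECUTION_MS = 50    # hypothetical: run the function body itself


class ContainerPool:
    """Toy model of one function's container (hypothetical timings)."""

    def __init__(self) -> None:
        self.warm = False  # no container exists yet

    def invoke(self) -> int:
        """Return the simulated response time of one sequential invocation."""
        if not self.warm:
            self.warm = True  # cold start: a new container is created
            return COLD_START_MS + EXECUTION_MS
        return EXECUTION_MS  # warm: the existing container is reused

    def scale_to_zero(self) -> None:
        self.warm = False  # idle: the container is discarded


pool = ContainerPool()
print([pool.invoke() for _ in range(3)])  # only the first call pays the cold start
pool.scale_to_zero()
print(pool.invoke())  # after scaling to zero, the next call is cold again
```

Only the first call in a container pays the startup cost; once the platform scales to zero, the next call pays it again.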

OpenWhisk

OpenWhisk is an open-source platform that offers serverless execution of functions with minimal configuration. It can be hosted on premise using container orchestrators such as Kubernetes and OpenShift, or with Docker Compose.

With on-premise hosting you gain control over costs, along with greater independence from service providers, making it much easier to move between providers (you can even do without a provider altogether if all your services are on premise).

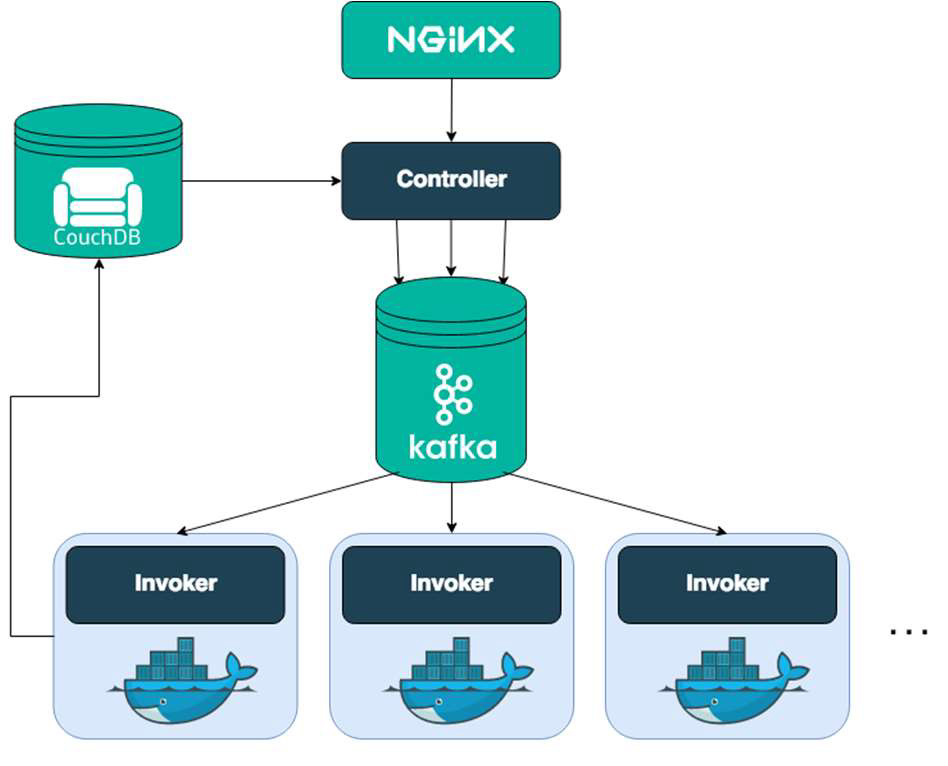

OpenWhisk requires a base infrastructure to manage the platform itself, which consumes a relatively constant amount of resources. However, the consumption of resources by the workload itself will be variable.

Figure 4: OpenWhisk infrastructure diagram.

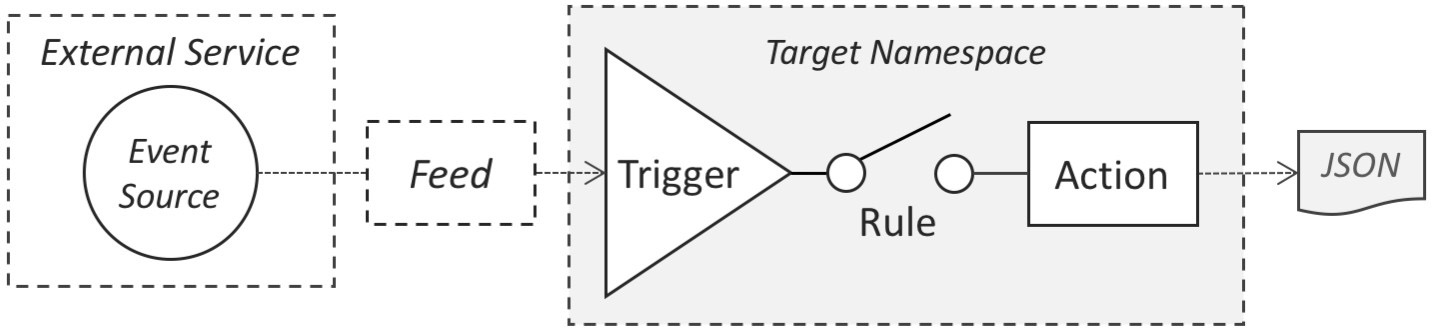

OpenWhisk works on an event-driven basis:

An event is generated from an external feed, such as an HTTP request to modify an entity in a database or a message in a Kafka broker.

This event fires a trigger. Rules associate the trigger with the actions to be executed, each of which generates a response in JSON format.

Figure 5: Diagram of the OpenWhisk event-driven system.
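The event flow described above (event, trigger, rule, action, JSON response) can be modelled in a few lines. This is a toy in-process sketch, not OpenWhisk's API: the trigger names, the `rules` list and the two actions are hypothetical, chosen only to show how rules bind a trigger to the actions it runs.

```python
import json
from typing import Callable

Action = Callable[[dict], dict]


# Hypothetical actions: each receives the event parameters and returns a dict
def log_message(params: dict) -> dict:
    return {"logged": params.get("message", "")}


def measure_message(params: dict) -> dict:
    return {"length": len(params.get("message", ""))}


# Rules: each one associates a trigger name with an action
rules: list[tuple[str, Action]] = [
    ("kafka-message", log_message),
    ("kafka-message", measure_message),
]


def fire_trigger(trigger: str, event: dict) -> list[dict]:
    """Run every action whose rule matches the trigger and collect the results."""
    return [action(event) for name, action in rules if name == trigger]


# An external feed (e.g. a Kafka broker) would fire the trigger with the event
for result in fire_trigger("kafka-message", {"message": "hello"}):
    print(json.dumps(result))
```

A trigger with no matching rule simply runs nothing, which mirrors the idea that actions only consume resources when an event actually reaches them.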

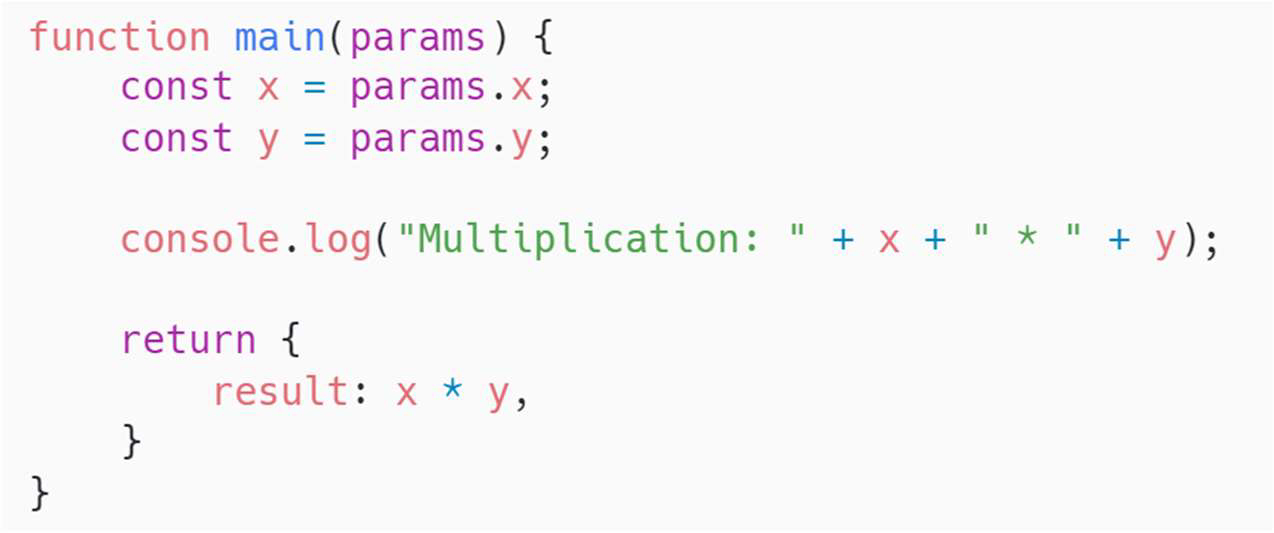

With OpenWhisk, you can easily define actions in a multitude of languages. To implement one, you define a function that receives a JSON object with the input arguments and returns another JSON object with the result.

Figure 6: Example of function with JSON object.
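In Python, for example, an OpenWhisk action is a function named `main` that receives the JSON input as a dictionary and returns a dictionary, which the platform serialises as the JSON result. The `name` parameter and the greeting below are just an illustrative example.

```python
def main(args: dict) -> dict:
    # args holds the JSON input parameters as a Python dict
    name = args.get("name", "stranger")
    # The returned dict becomes the action's JSON result
    return {"greeting": f"Hello {name}!"}
```

Saved as `hello.py`, the action could be deployed with `wsk action create hello hello.py` and invoked with `wsk action invoke hello --result --param name World`, which should return `{"greeting": "Hello World!"}`.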

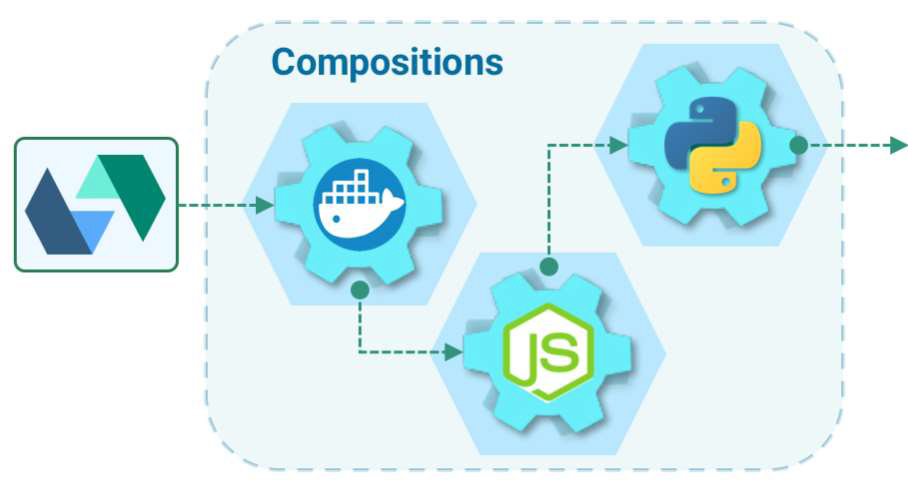

To execute an action, a container is instantiated with its associated runtime; the action runs and the container is discarded when it finishes. In this way, only the necessary resources are used at any given time. OpenWhisk also allows you to define composite actions (sequences), consisting of a series of actions executed one after another, where the output of each function becomes the input of the next.

Figure 7: OpenWhisk composition diagram.
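The chaining behaviour of a composite action can be sketched as plain function composition over dictionaries. The `sequence` helper and the two word-counting actions are hypothetical illustrations of the idea, not OpenWhisk code; on the platform itself, a sequence is declared from existing actions (for example with `wsk action create NAME --sequence action1,action2`).

```python
from functools import reduce
from typing import Callable

Action = Callable[[dict], dict]


# Hypothetical actions: each takes a dict and returns a dict
def split_words(params: dict) -> dict:
    return {"words": params.get("text", "").split()}


def count_words(params: dict) -> dict:
    return {"count": len(params["words"])}


def sequence(*actions: Action) -> Action:
    """Chain actions so that each one's output dict is the next one's input."""
    def run(params: dict) -> dict:
        return reduce(lambda acc, action: action(acc), actions, params)
    return run


word_count = sequence(split_words, count_words)
print(word_count({"text": "serverless functions compose nicely"}))
```

Because every action shares the same dict-in, dict-out contract, any action can be placed at any position in the chain, provided it understands the keys produced by the previous one.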

OpenWhisk can listen to multiple external feeds, executing defined actions in response to, for example, a commit on GitHub or a Slack message.

Figure 8: Different OpenWhisk features.

This video shows how OpenWhisk works as a serverless option:

Want to know more about Hunters?

A Hunter rises to the challenge of trying out new solutions, delivering results that make a difference. Join the Hunters programme and become part of a diverse group that generates and transfers knowledge.

Anticipate the digital solutions that will help us grow. Find out more about Hunters on our website.